Linear regression is one of the simplest and most widely used techniques in machine learning and statistics. It models the relationship between a dependent variable and one or more independent variables. This article explores the fundamentals of linear regression, its types, applications, and how to implement it. Visit The Coding College for more tutorials and resources.

What is Linear Regression?

Linear regression is a predictive modeling technique that assumes a linear relationship between the input (independent) variable(s) and the output (dependent) variable.

Key Concept

The goal is to find the best-fit line that minimizes the difference between predicted and actual values.

The equation of a simple linear regression model is: y = mx + b

Where:

- y = Dependent variable (target)

- m = Slope of the line (coefficient)

- x = Independent variable (input)

- b = Intercept

Types of Linear Regression

- Simple Linear Regression

  - Involves one independent variable.

  - Example: Predicting house price based on its size.

- Multiple Linear Regression

  - Involves two or more independent variables.

  - Example: Predicting house price based on size, location, and age.
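For reference, the simple model y = mx + b extends to the multiple case by giving each independent variable its own coefficient. The notation below is an assumption that follows the symbols used above: b is the intercept, m_j is the coefficient of the j-th input x_j, and k is the number of inputs.

```latex
y = b + m_1 x_1 + m_2 x_2 + \dots + m_k x_k
```

With k = 1 this reduces to the simple linear regression equation.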

Assumptions of Linear Regression

For linear regression to work effectively, certain assumptions must hold:

- Linearity: The relationship between input and output is linear.

- Independence: Observations are independent of each other.

- Homoscedasticity: The variance of residuals is constant.

- Normality: Residuals follow a normal distribution.

- No Multicollinearity: Independent variables should not be highly correlated with each other (this matters for multiple linear regression).
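Some of these assumptions can be given a rough first check in code. The sketch below is only an illustration, not a formal statistical test: the helper name `residual_diagnostics`, the synthetic data, and the choice of diagnostics are all assumptions made for this example. It fits a least-squares model with NumPy, then reports the mean of the residuals, the correlation between the fitted values and the absolute residuals (a hint about non-constant variance), and the largest pairwise correlation between features (a hint about multicollinearity).

```python
import numpy as np


def residual_diagnostics(X, y):
    """Fit least squares with an intercept and return rough diagnostic numbers."""
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    if X.ndim == 1:
        X = X.reshape(-1, 1)

    # Add a column of ones so the first coefficient acts as the intercept.
    A = np.column_stack([np.ones(len(X)), X])
    coef, *_ = np.linalg.lstsq(A, y, rcond=None)
    fitted = A @ coef
    residuals = y - fitted

    # Homoscedasticity hint: |residuals| should not trend with the fitted values.
    spread_corr = float(np.corrcoef(fitted, np.abs(residuals))[0, 1])

    # Multicollinearity hint: largest absolute correlation between two features.
    if X.shape[1] > 1:
        corr = np.corrcoef(X, rowvar=False)
        off_diagonal = corr[~np.eye(X.shape[1], dtype=bool)]
        max_feature_corr = float(np.max(np.abs(off_diagonal)))
    else:
        max_feature_corr = 0.0

    return {
        "residual_mean": float(residuals.mean()),
        "spread_corr": spread_corr,
        "max_feature_corr": max_feature_corr,
    }


# Synthetic data, made up purely for this sketch.
rng = np.random.default_rng(0)
x1 = rng.normal(size=200)
x2 = rng.normal(size=200)
y = 2 + 3 * x1 - x2 + rng.normal(scale=0.5, size=200)

# Two unrelated features.
independent = residual_diagnostics(np.column_stack([x1, x2]), y)

# Second feature is almost an exact multiple of the first.
collinear = residual_diagnostics(np.column_stack([x1, 2 * x1 + 0.01 * x2]), y)

print("independent features:", independent)
print("collinear features:  ", collinear)
```

A `max_feature_corr` close to 1, as in the second call, suggests that two inputs carry nearly the same information. Plotting the residuals is usually a better check than any single number.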

Applications of Linear Regression

- Finance

  - Predicting stock prices or assessing risk.

- Healthcare

  - Analyzing the effect of medication dosage on recovery time.

- E-Commerce

  - Forecasting sales based on advertising expenditure.

- Education

  - Predicting student performance based on study hours.

How Linear Regression Works

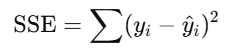

Linear regression works by finding the best-fit line that minimizes the sum of squared errors (SSE):

$$\text{SSE} = \sum_{i=1}^{n} (y_i - \hat{y}_i)^2$$

Where $y_i$ is the actual value, $\hat{y}_i$ is the predicted value, and $n$ is the number of observations.

The coefficients ($m$ and $b$) are calculated using Ordinary Least Squares (OLS), the method that chooses them to minimize the SSE.
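For a single input, minimizing the SSE has a well-known closed-form solution: the slope is the covariance of x and y divided by the variance of x, and the intercept makes the line pass through the point of means. The sketch below works this out by hand with NumPy, using the same sample values as the Python example in this article. The variable names are chosen for this illustration only.

```python
import numpy as np

# Same sample values as the article's Python example.
x = np.array([1, 2, 3, 4, 5], dtype=float)
y = np.array([3, 4, 2, 5, 6], dtype=float)

x_mean, y_mean = x.mean(), y.mean()

# Slope: sum of cross-deviations divided by sum of squared x-deviations.
m = np.sum((x - x_mean) * (y - y_mean)) / np.sum((x - x_mean) ** 2)

# Intercept: the fitted line passes through (x_mean, y_mean).
b = y_mean - m * x_mean

# Predictions and the sum of squared errors for the fitted line.
y_hat = m * x + b
sse = np.sum((y - y_hat) ** 2)

print(f"m = {m:.2f}, b = {b:.2f}, SSE = {sse:.2f}")
# prints: m = 0.70, b = 1.90, SSE = 5.10
```

Dividing the SSE by the number of observations (5) gives the mean squared error, 1.02 for this data.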

Implementing Linear Regression in Python

Here’s a simple example:

```python
import numpy as np
import matplotlib.pyplot as plt
from sklearn.linear_model import LinearRegression
from sklearn.metrics import mean_squared_error

# Sample Data
X = np.array([1, 2, 3, 4, 5]).reshape(-1, 1)  # Independent variable
y = np.array([3, 4, 2, 5, 6])  # Dependent variable

# Model Training
model = LinearRegression()
model.fit(X, y)

# Predictions
y_pred = model.predict(X)

# Results
print("Coefficient (m):", model.coef_[0])
print("Intercept (b):", model.intercept_)
print("Mean Squared Error:", mean_squared_error(y, y_pred))

# Visualization
plt.scatter(X, y, color='blue', label='Actual')
plt.plot(X, y_pred, color='red', label='Predicted')
plt.title("Linear Regression Example")
plt.xlabel("Independent Variable (X)")
plt.ylabel("Dependent Variable (y)")
plt.legend()
plt.show()
```

Strengths of Linear Regression

- Simplicity: Easy to understand and implement.

- Efficiency: Fast and computationally inexpensive.

- Interpretability: Coefficients provide clear insights into the relationship between variables.

Limitations of Linear Regression

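- Sensitive to Outliers: Because errors are squared, a single extreme value can pull the fitted line noticeably.

- Assumes Linearity: Poor performance if the relationship is non-linear.

- Limited Flexibility: Not suitable for complex relationships, and plain OLS tends to overfit when the number of features is large relative to the number of observations.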
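The sensitivity to outliers is easy to see in a small experiment. The sketch below uses made-up data that lies exactly on the line y = 2x + 1, then replaces one observation with an extreme value and refits. The helper `fit_line` and the data are hypothetical and exist only for this illustration.

```python
import numpy as np


def fit_line(x, y):
    """Return (slope, intercept) of the least-squares line through the points."""
    slope, intercept = np.polyfit(x, y, deg=1)
    return slope, intercept


# Hypothetical data lying exactly on y = 2x + 1.
x = np.arange(1, 11, dtype=float)
y_clean = 2 * x + 1

# Same data, but the last observation is replaced by an extreme value.
y_outlier = y_clean.copy()
y_outlier[-1] = 100.0

m_clean, b_clean = fit_line(x, y_clean)
m_out, b_out = fit_line(x, y_outlier)

print(f"clean data:   slope = {m_clean:.2f}, intercept = {b_clean:.2f}")
print(f"with outlier: slope = {m_out:.2f}, intercept = {b_out:.2f}")
```

Changing one point out of ten moves the slope from 2 to about 6.3, so the fitted line no longer describes the other nine observations well.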

Real-World Example: Predicting House Prices

Dataset

- Size: 100 houses.

- Features: Square footage, number of bedrooms.

- Target: Price.

Approach

- Load the data and preprocess it.

- Use multiple linear regression.

- Evaluate the model using metrics like R² and Mean Squared Error (MSE).
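The approach above can be sketched as follows. No real dataset accompanies this example, so the code generates synthetic data for 100 houses. The pricing rule, noise level, value ranges, and the 80/20 train/test split are all assumptions made for illustration and do not describe any real housing market.

```python
import numpy as np
from sklearn.linear_model import LinearRegression
from sklearn.metrics import mean_squared_error, r2_score
from sklearn.model_selection import train_test_split

# Synthetic stand-in for the dataset: 100 houses, two features, one target.
rng = np.random.default_rng(42)
n_houses = 100
sqft = rng.uniform(800, 3500, size=n_houses)   # square footage
bedrooms = rng.integers(1, 6, size=n_houses)   # 1 to 5 bedrooms

# Made-up pricing rule plus random noise (an assumption, not real data).
price = 50_000 + 150 * sqft + 10_000 * bedrooms + rng.normal(0, 20_000, size=n_houses)

# Preprocess: assemble the feature matrix and hold out 20% for evaluation.
X = np.column_stack([sqft, bedrooms])
X_train, X_test, y_train, y_test = train_test_split(
    X, price, test_size=0.2, random_state=42
)

# Multiple linear regression: one coefficient per feature.
model = LinearRegression().fit(X_train, y_train)
y_pred = model.predict(X_test)

# Evaluate on the held-out houses.
r2 = r2_score(y_test, y_pred)
mse = mean_squared_error(y_test, y_pred)

print("Coefficients (sqft, bedrooms):", model.coef_)
print("Intercept:", model.intercept_)
print("R²:", r2)
print("MSE:", mse)
```

Evaluating on held-out data rather than the training data gives a more honest estimate of how the model would perform on new houses.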